AI File Classification Accuracy: Why a 30% Miss Rate Breaks Your AI Pipeline

Enterprises benchmarking AI readiness are asking the wrong question. They want to know how accurate their models are. The question they should be asking is how accurately their files were classified before those models ever saw them.

- Type: Blogs

- Date: 07/04/2026

- Author: Data X-Ray

- Tags: PII classification, AI Readiness, Pre-ingestion Data Governance

What is File Classification Accuracy?

File classification accuracy measures how reliably a system identifies the content of an unstructured file: whether it contains sensitive data, what type it is, what policies apply to it. It is measured at two levels: document level (does this file contain PII or not) and entity level (did the system find every instance of every sensitive element within the file). Document-level accuracy determines whether a file should enter an AI pipeline. Entity-level accuracy determines whether everything inside it has been accounted for.

The Number Your AI Vendor is not Showing You

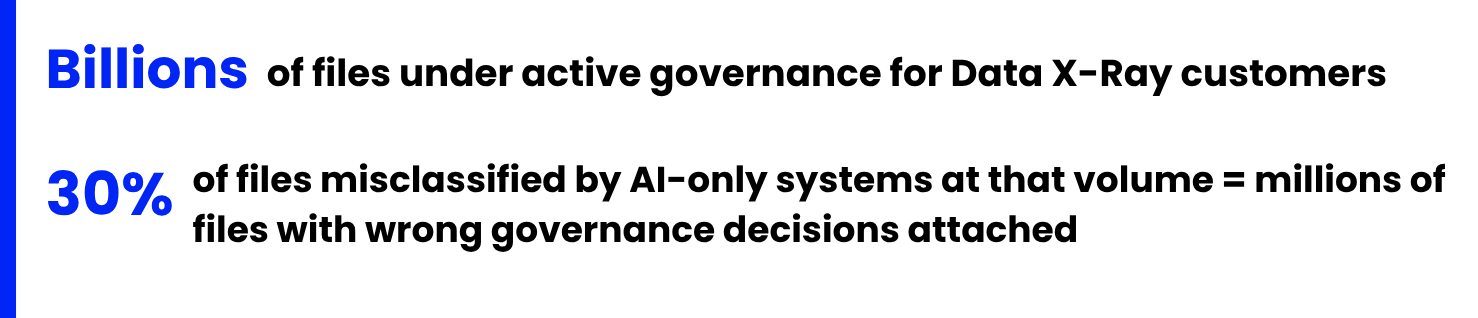

AI-only classification systems achieve around 70% accuracy on complex unstructured documents. Academic research on LLM-based document classification found GPT-3.5 achieved 70-74% accuracy on binary classification of complex research documents.1

Enterprise file estates are harder still: nested archives, scanned images, mislabelled formats, 100+ languages, and decades of accumulated ambiguity. The accuracy your vendor quotes was almost certainly not tested on your files.

70% sounds manageable until you apply it to scale.

At enterprise scale, a 30% miss rate is not a rounding error. It is the number of files entering your AI pipeline with the wrong sensitivity label, the wrong retention flag, or no classification at all.

Why AI-only Classification Falls Short on Enterprise Files

The problem is not that large language models are bad at reading documents. The problem is that enterprise files are not well-formed documents. They are the accumulated chaos of two or three decades of human behaviour inside systems that were never designed for governance.

What that looks like in practice:

Files that lie. An extension that says .pdf but contains scanned image data with no extractable text. A .docx that is actually a protected form. A CSV that has embedded freeform notes in a column nobody expected.

Files within files. A zip archive containing email exports containing attachments containing embedded spreadsheets. An AI classifier sees the outer container, not what is nested inside.

Scanned documents. An image of a page is not a document. Until OCR runs correctly, the AI has nothing to classify. OCR quality varies significantly across languages, scan quality, and document age.

Ambiguous content. A number that looks like a credit card number in one context is a product SKU in another. Pattern matching produces false positives. Context resolves them. AI without structure misses the context.

A pure LLM approach handles each of these cases worse than a system that was built specifically for file content. Which is why relying on AI alone for enterprise file classification is the wrong architecture for this problem.

What 98.7% Accuracy Looks Like and What it Requires

Data X-Ray's classification engine achieves 98.7% accuracy at the document level for PII and PCI detection on text-based files. That number is not a marketing claim. It is the output of a validated benchmark built on labelled corpora, tested against real enterprise document conditions, and reproducible.

It is also not magic. It is the result of a three-layer architecture that AI alone cannot replicate:

1. NLP and ML annotators. Identify content semantically. A name in a contract looks different to a name in an email signature. An account number in a financial report carries different classification weight than the same string in a draft. Semantic precision reduces false positives. This is the layer that makes classification defensible in a regulatory context.

2. GenAI and LLM categorisation. Evaluates the document as a whole. Is this a vendor contract, a board presentation, an HR file, or a research report? Full-text analysis at the document level, not keyword matching against individual fields.

3. Customer-defined rules. Your sensitivity thresholds, your retention policies, your business logic. A healthcare organisation's definition of sensitive is not the same as a bank's. Classification that cannot be configured to your rules is classification for someone else's data estate, not yours.

What Does This Look Like In Practice?

Regex finds a credit card number. AI guesses it is a financial document. A three-layer system knows it is an expired vendor contract with PCI data that should have been archived three years ago, flags it under your retention policy, and routes it out of your AI pipeline before ingestion. That is the difference between a number and a governance decision.

Why this has to Happen Before Ingestion, not After

This is the part that most AI readiness conversations skip entirely.

Classification is not a feature you add to an AI pipeline. It is a precondition for building one. Once a file has been ingested by an AI agent, the governance decision has already been made, whether you made it consciously or not.

An AI agent that surfaces a document containing PII belonging to a data subject who requested deletion eighteen months ago has already created a compliance event. It does not matter that the deletion was recorded in your system of record. The file existed. The agent found it. The output is evidence.

The only way to prevent that outcome is to classify files before they are in scope for ingestion. Not after the model has acted on them. Before.

This is why pre-ingestion classification is a prerequisite for the Enterprise Context Layer, not an add-on to it. Atlan's Enterprise Data Graph can govern, lineage-track, and serve context about your data assets, but only the assets that have been correctly classified before ingestion. A file that enters the pipeline with the wrong sensitivity label, or no label at all, arrives in the context layer with a governance gap already baked in. Data X-Ray's classification runs before that boundary, ensuring what Atlan governs is what was actually classified.

Why Classification Accuracy isn’t Set and Forget

One of the least-discussed challenges in enterprise file classification is that accuracy is not static. A file estate changes every day. New files arrive. Existing files are modified. Policy requirements shift as regulations evolve.

A classification system that delivers high accuracy at deployment does not maintain that accuracy passively. It requires ongoing review and correction: a mechanism for identifying where classifications were wrong, understanding why, and correcting the model without requiring manual rule rewrites across millions of files. In testing, the system reached 98.7% PII accuracy in four iterations.

Data X-Ray’s classification engine delivers high accuracy through a combination of models and rules. The system provides clear, auditable classification outputs so teams can review results and take corrective action where needed. This is architecturally significant for any enterprise running AI at scale: the accuracy your AI pipeline depends on today needs to be maintainable as your file estate evolves.

What this Means for Your AI Roadmap

If you are building an enterprise AI capability on top of unstructured file content, the classification question is not optional. It sits directly in the critical path between your file estate and your AI pipeline. The questions worth asking before you start:

- What percentage of your file estate has been classified to a standard your AI team would trust?

- At what accuracy level, and under what conditions, was that classification performed?

- Does your classification system operate across every environment your files live in, including on-premises and air-gapped systems?

- Can your classification accuracy be independently validated, or are you relying on a vendor's uncaveated percentage claim?

The answers to those questions determine whether your AI project will reach production or stall at the first governance review.

Data X-Ray classifies every file before it enters your AI pipeline, at 98.7% documented accuracy on PII and PCI detection. If you are evaluating AI readiness for your unstructured file estate, start with the classification question.

See where your file estate stands before your AI pipeline does.

Related Reading

Atlan Activate (April 29): The Enterprise Context Layer event bringing together data and AI infrastructure leaders. Data X-Ray is participating as a Context Layer Partner. https://atlan.com/activate/

Source:

1 Castro et al., "Large language models overcome the challenges of unstructured text data in ecology," ScienceDirect, 2024.https://www.sciencedirect.com/science/article/pii/S157495412400284X